Why I flipped

Engineering is all about problem solving. Even though I’ve always strived for interactive lectures in the past, I still felt that my students were too passive. I wanted a more active classroom where students would work collaboratively to solve problems. Collaborative problem solving is a hard sell for freshmen, but it is a necessary skill for professional engineers.

Technology Tools

Screencast Software

Flipping requires that the “facts” be acquired out of class so that the “applications” can be performed in class. I struggled with how to put my lectures online and still retain my active “questioning” style. Straight videos were unsatisfactory since I couldn’t stop and get the student to perform an example problem of the topic just discussed. My attempt at a solution is to use a software tool called Captivate (an Adobe product). A similar product is Camtasia. Both of these tools allow you to embed interactive questions in the lecture. An example of one of my lectures is shown here.

2016 Improvements

One of the difficulties in making screencasts is the voice-over. This is especially problematic when the script changes. For all of my course lectures, I recorded my voice reading the slide script. Any change to the script required that I re-record the entire script for that slide, which is very tedious. Recently, I went to an ASEE conference where a presenter showed me how to use the Adobe Captivate speech management tool, which converts text to speech. The speech is actually pretty good considering that it is computer generated.

Blackboard

EE102 is served through Blackboard for no other real reason than that our university uses this learning management system. As with all tools, Blackboard has its pros and cons. I like the way Blackboard facilitates (read “forces”) me to maintain a structured class. I can serve all course materials, i.e. Captivate lectures, Excel homework, Blackboard homework, and Google Docs lab data links. I like how I can have the students upload their lab reports and have them graded online. I really don’t like how Blackboard performs assessments, and neither do my students. It is clunky at best. I had hoped to use this feature for online homework, but failed miserably.

2015 – Blackboard Update

In Spring 2015 I successfully moved to using Blackboard tests for online homework! This transition occurred through a brain shift. In the past I used one test for one homework set, i.e. all the homework questions were contained in one test. The difficulty with this approach is that if students missed one question in the homework and wanted to retry, they would have to do the entire test (all homework questions) again. This was tedious in the extreme. Another faculty member suggested that I put only one homework question in each test and create several tests per homework set. Students could then retake each test independently of the others, and so retake only those questions that they originally missed. Hit hand against head! This solution was so obvious that it required someone else to point it out to me!

Excel Programmed Homework – RETIRED 2015

I still believe in immediate feedback for freshmen, but in 2015 I transitioned to using Blackboard tests for questions. See the Blackboard section.

It is important for freshmen to have immediate feedback on whether or not they are understanding a concept and performing a calculation correctly. Flipping allows me to be present when students are confused or stumble on problems. Additionally, the programmed Excel homework lets students know whether they are performing the calculation correctly, and therefore prompts them to seek help when they are not. Shown here is an example homework.

Google Docs

Collaboration and teamwork are important skills for new engineers to learn. The labs in EE102 are structured so that the lab data acquired by all students are compiled in a Google Docs spreadsheet. Students then write their lab reports using the compiled data set. This leads to a richer data set from which the students can discuss measurement precision and measurement error. Additionally, since data are uploaded in real time, students can assess whether or not their measured data are approximately the same as the rest of the data. When their data are vastly different from the class data, they are prompted to discover why. This triggers a level of troubleshooting that was previously non-existent.
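
The comparison students make against the class data can be illustrated with a small sketch. This is not the spreadsheet used in EE102. The function name `flag_outlier_measurements`, the team labels, the sample values, and the 20% tolerance are all assumptions chosen for illustration.

```python
import statistics


def flag_outlier_measurements(class_data, tolerance=0.20):
    """Return the measurements that differ from the class median by more
    than `tolerance` (a fraction of the median).

    class_data: dict mapping a team label to one measured value.
    The 20% default tolerance is an illustrative assumption.
    """
    median = statistics.median(class_data.values())
    if median == 0:
        raise ValueError("relative deviation is undefined for a zero median")
    flagged = {}
    for team, value in class_data.items():
        relative_deviation = abs(value - median) / abs(median)
        if relative_deviation > tolerance:
            flagged[team] = value
    return flagged


# Hypothetical voltage readings (in volts) entered by four lab teams.
readings = {"team1": 4.9, "team2": 5.1, "team3": 5.0, "team4": 9.8}
suspect = flag_outlier_measurements(readings)
```

A team whose reading is flagged would then check its wiring or meter settings while still in lab, which is the troubleshooting behavior described above.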

In Class

Assessment

I use a number of assessment tools for this class:

- Pre/post concept inventory test: an online multiple-choice concept test given at the beginning and the end of the class. Assesses concept learning gain.

- Exams: two one-hour exams and one comprehensive final. Assesses content knowledge.

- Survey: this year we will give an end-of-year survey to assess attitude, i.e. how the students felt about the “flipped” paradigm. This survey also attempts to measure development of social capital.

Concept Inventory Test:

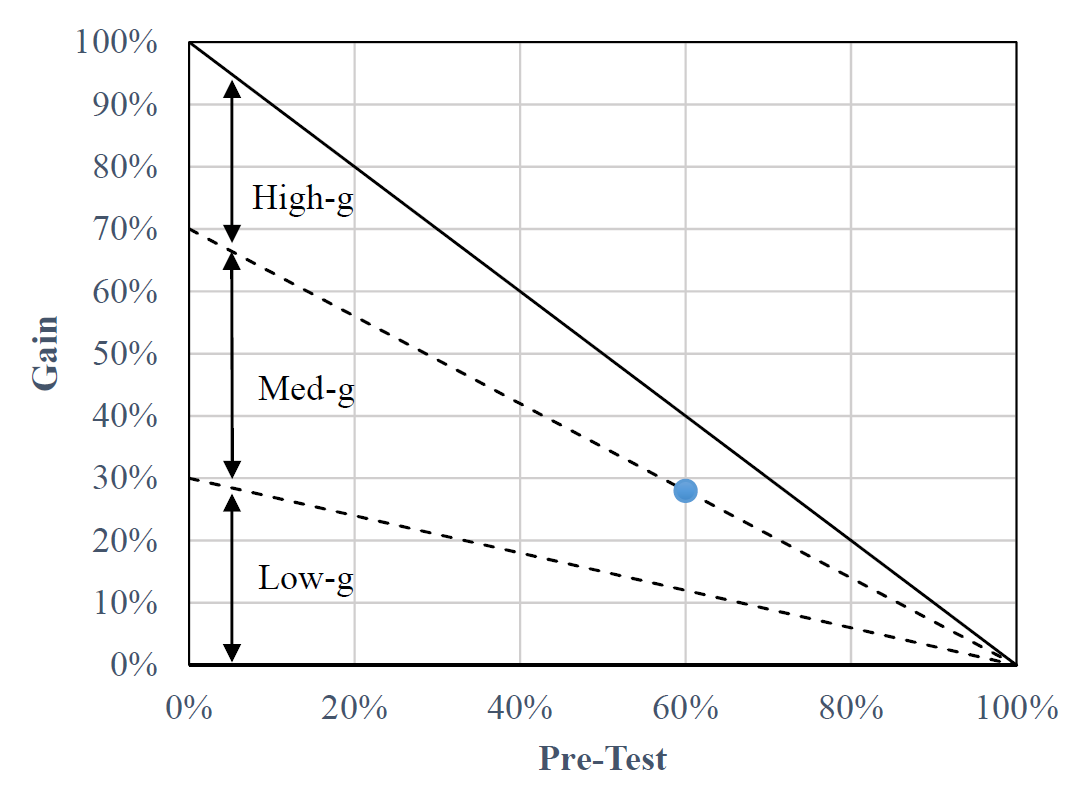

In 2007 I administered a circuit concept inventory test to 15 students as a pre/post-test. The blue marker in the figure above shows the average learning gain achieved by those students. This figure was created in the manner of Hake (1998). Lines indicate maximum possible gain (solid line) and transitions (dashed lines) between low gain, medium gain, and high gain as defined by Hake. I am again administering the pre/post concept inventory test to the 37 students that I have this semester. Watch for an update of this figure to reflect the learning gains for this flipped course.
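
For readers unfamiliar with Hake’s plot, the quantity behind it is the average normalized gain: the actual gain divided by the maximum possible gain, with 0.3 and 0.7 as the boundaries between low, medium, and high gain. The sketch below computes it under those definitions. The function names and the example scores are hypothetical and are not the 2007 class data.

```python
def normalized_gain(pre_percent, post_percent):
    """Average normalized gain <g> from class-average scores in percent.

    <g> = (post - pre) / (100 - pre), i.e. actual gain over maximum possible gain.
    """
    if not 0 <= pre_percent < 100:
        raise ValueError("pre_percent must be in the range [0, 100)")
    return (post_percent - pre_percent) / (100.0 - pre_percent)


def gain_band(g):
    """Classify a normalized gain using Hake's 0.3 and 0.7 boundaries."""
    if g >= 0.7:
        return "high"
    if g >= 0.3:
        return "medium"
    return "low"


# Illustrative class averages only: 40% on the pre-test, 70% on the post-test.
g = normalized_gain(40, 70)
band = gain_band(g)
```

In the figure, the solid line corresponds to a normalized gain of 1 (every point that could be gained was gained), and the dashed lines correspond to the two band boundaries.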

Exam 1 Results (updated 2015):

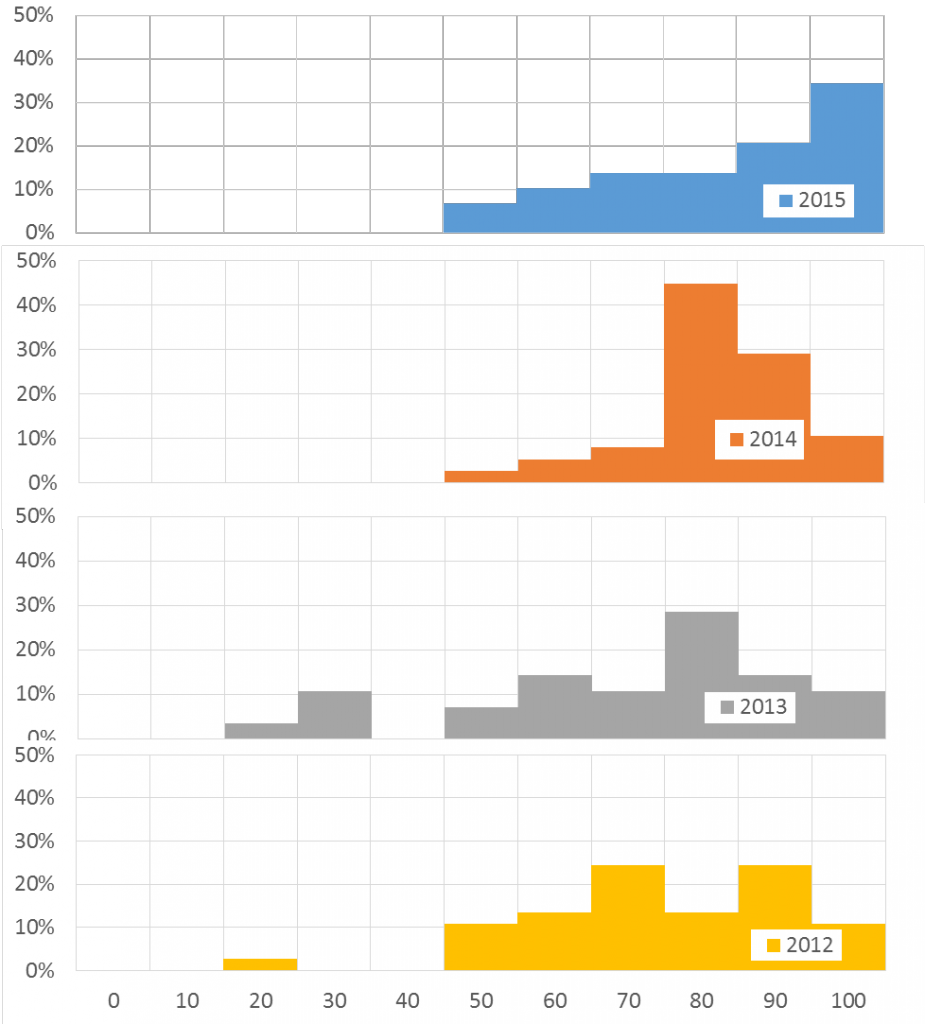

Shown here are the results of the first exam in the years when I gave my regular lecture (2012 and 2013) and in my first (2014) and second (2015) years trying out the flipped paradigm.

In the pre-flipped years (2012-2013) you see a double-peak distribution. I’ve found that this is typical in my freshman class. The students have a wide range of skills coming into the class, as represented by their standing in their math sequence. The co-requisite for the class is Calc1; however, the students range from those taking Calc1 through those who have already passed DiffEQ. I suspect that the students that I’m teaching are those in the lower score peak. The higher score peak students are those who would “get it” no matter what I did. The previous two years are also characterized by a broad distribution of scores, with some so low that I suspect those students were not engaged at all.

In contrast, the 2014 distribution shows a single primary peak with a much smaller standard deviation (11, compared with 21 in 2013 and 17 in 2012). The improvement in top scores is even more dramatic in 2015, when 34% of the class scored above 90 on the exam. This compares with only 11% of the class scoring above 90 in each of the past three years.

What appears to have happened is that the students who have struggled in the past have acquired whatever support they needed through this flipped paradigm and have dramatically improved their understanding (as measured by the exam).

| Exam 1 Statistics | 2015 | 2014 | 2013 | 2012 |
| --- | --- | --- | --- | --- |
| Average | 79 | 78 | 66 | 70 |
| Median | 83 | 78 | 71 | 70 |
| Standard Deviation | 17 | 11 | 21 | 17 |
| % students > 90 | 34 | 11 | 11 | 11 |
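
The four statistics in these exam tables can be reproduced from a list of scores with a few lines of code. The sketch below is only an illustration: the scores are made up, the function name `exam_summary` is hypothetical, and it assumes the sample standard deviation, since the source does not say whether the sample or population formula was used.

```python
import statistics


def exam_summary(scores, threshold=90):
    """Summarize exam scores with the statistics reported in the tables."""
    above = sum(1 for score in scores if score > threshold)
    return {
        "average": statistics.mean(scores),
        "median": statistics.median(scores),
        # Assumption: sample standard deviation (n - 1 in the denominator).
        "std_dev": statistics.stdev(scores),
        "pct_above": 100.0 * above / len(scores),
    }


# Made-up scores for illustration; these are not EE102 data.
scores = [55, 62, 70, 78, 83, 85, 91, 95]
summary = exam_summary(scores)
```

Reporting the median alongside the average is useful here because a few very low scores pull the average down much more than the median.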

Exam 2 Results (updated 2015):

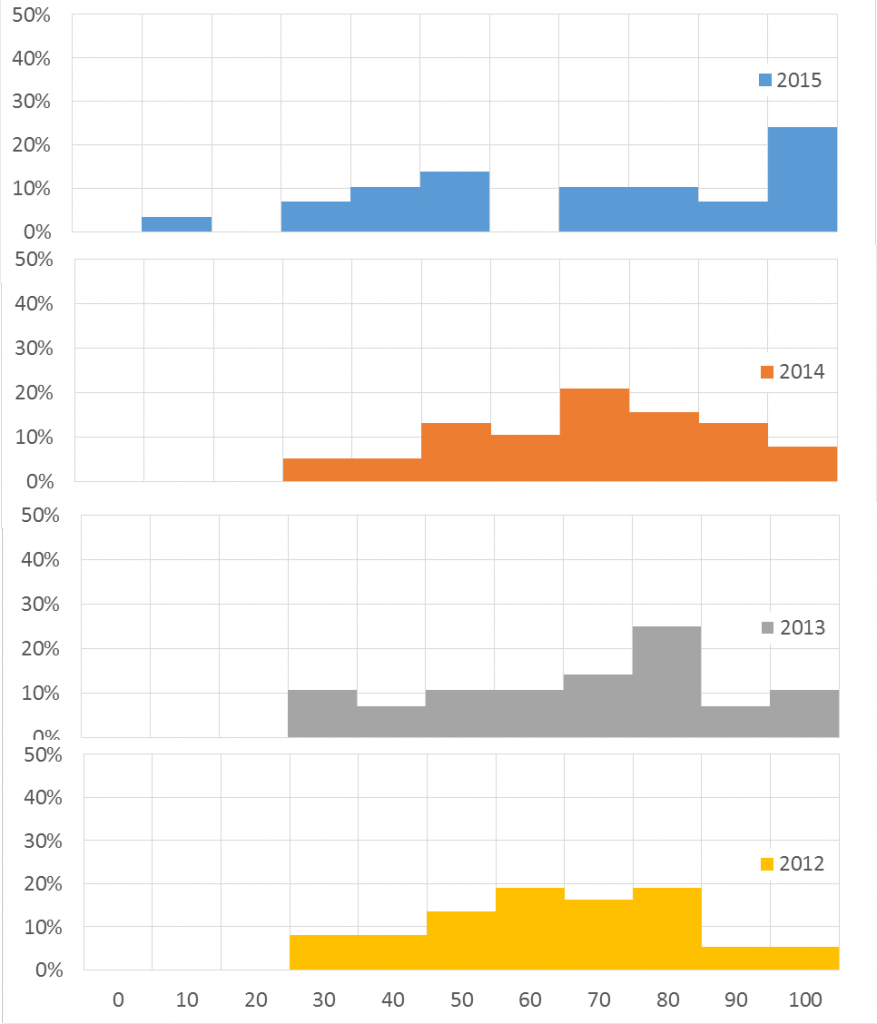

Shown here are the results of the second exam in the years when I gave my regular lecture (2012 and 2013) and in my first (2014) and second (2015) years trying out the flipped paradigm.

Exam 2 is the hardest of the exams given in EE102. In Exam 1 the students can still rely to some extent on prior knowledge. Exam 2 represents, for most students, entirely new material. The exam scores show a broad distribution, with a standard deviation of roughly 20 or more in every year. I was disappointed in the results from 2014, although at the time I could tell that my execution of the in-class portion of the class was not going well. Students had stopped coming to class. Why come to class if everything is done online? In 2014, the students didn’t perceive the value in working problems in class. When they came to class they had not previewed the material and were therefore not prepared for the in-class work. This was very frustrating for me and, I think, also for the students.

In 2015, I instituted two changes: (i) points were given to students who came to class and participated; (ii) students were required to submit a pre-class assignment before coming to the first class of the module. The net result was that more students attended class and they were better prepared. The results from Exam 2 show that success. As with the first exam in 2015, more students scored above 90 (28%) than in the previous three years (~10%).

| Exam 2 Statistics | 2015* | 2015 | 2014 | 2013 | 2012 |
| --- | --- | --- | --- | --- | --- |
| Average | 68 | 65 | 65 | 63 | 59 |
| Median | 71 | 65 | 68 | 64 | 60 |
| Standard Deviation | 26 | 29 | 20 | 22 | 19 |
| % students > 90 | 29 | 28 | 9 | 11 | 6 |

* In 2015 there was one student who did minimal homework (15%) and had limited participation in class. That student chose to take Exam 2 but was significantly less prepared than the other students in the class. The 2015* column shows the statistics for Exam 2 with this student’s score removed.

Final Exam Results (updated 2015):

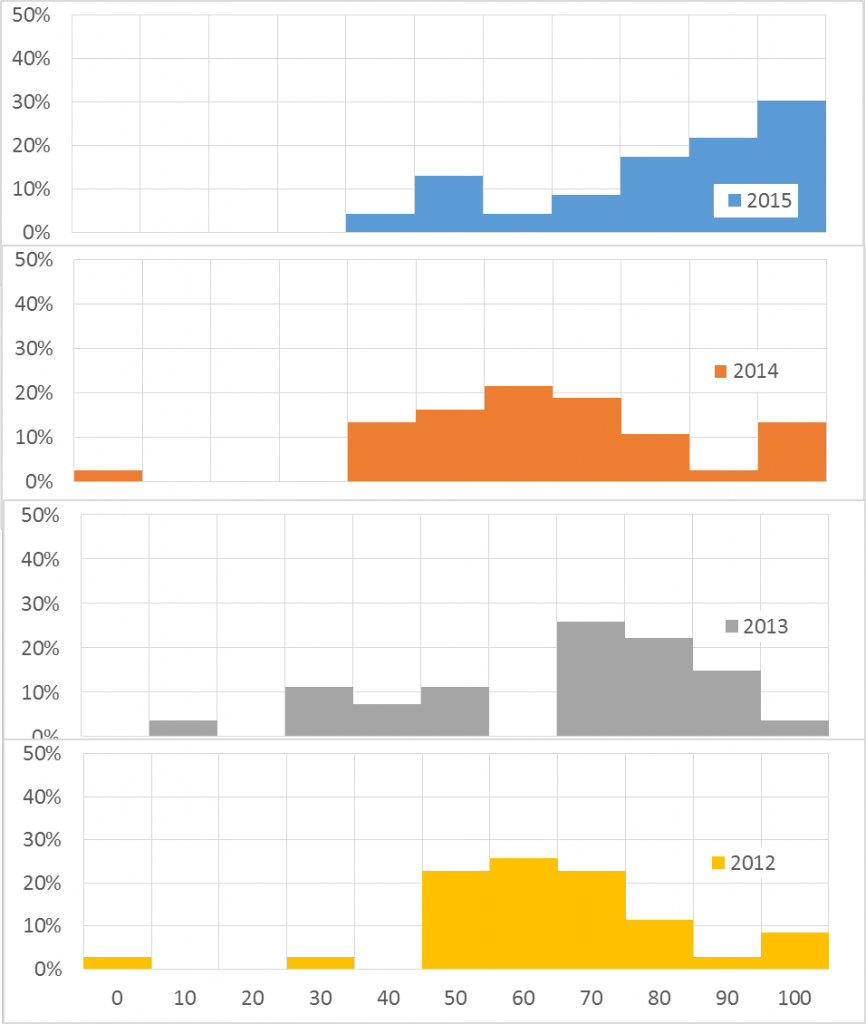

Shown here are the results of the final exam in the years when I gave my regular lecture (2012 and 2013) and in my first (2014) and second (2015) years trying out the flipped paradigm.

The Final Exam shows the same trend as the previous two exams. Specifically, the percentage of students scoring higher than 90 (30%) increased significantly compared to the previous three years!

| Final Exam Statistics | 2015 | 2014 | 2013 | 2012 |
| --- | --- | --- | --- | --- |
| Average | 77 | 60 | 59 | 59 |
| Median | 81 | 57 | 67 | 60 |
| Standard Deviation | 20 | 21 | 24 | 19 |
| % students > 90 | 30 | 14 | 4 | 9 |

What I’ve Learned (updated 2015)

Really this section should be “What I am Learning!”. Spring 2014 is the first semester where I’ve tried the flipped paradigm. It has been a challenge, full of frustrations and some successes. Based on the experience of 2014 I made several changes to the way the class is conducted. Specifically:

Changes (2015):

- Preparing the students! It was clear in 2014 that the students were resistant to the flipped classroom paradigm. For 2015 I made a special effort to prepare the students for what they were about to experience and to explain why it was important that they participate. Specifically:

    - I sent the pre-class email to introduce them to what would be expected during the semester.

    - On the first day of class I let them know again how the class would be conducted and why. Specifically, I let them know that research shows that they will learn more and retain more through this process.

    - For every exam, I showed them the historical results and their current success. This positive feedback enhanced the students’ enthusiasm for the process.

- Enforce preparedness! It was clear in 2014 that the students procrastinated until the last possible minute and only did that part of the work that would impact their grade. The lack of preparedness in 2014 minimized any impact of the in-class work.

    - In order for the students to be prepared, I had to be prepared! Prior to the start of the 2015 semester, I overhauled my Blackboard site. I totally restructured the site and included for each module a pre-class assignment, class lectures/chapter, and homework. I also included a calendar on the front page which indicated when everything was due.

    - I enforced preparation for class by assigning a graded pre-class assignment due on the first day of the module. Then, on the first day, I would ask the class what they knew about the current topic and have them summarize the module content for me.

    - I also explicitly graded attendance: 1 point per day, counted towards participation in the class.

- Enforce collaboration! In 2014, the students did not work together during class time. Those that did worked only with their friends.

    - During 2015, students were explicitly paired up. The pairing was defined by me and changed for each module. This means that students were required to work with all the other students in the class at some point during the semester.

    - Even with this forced pairing, students still tended to “parallel play”. In other words, they would work by themselves, side by side with their partner. During class time I had to frequently remind them to work together and to make sure their partner knew how to work the problem. As the semester continued this became less of an issue, but it was always present.
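
One way to generate pairings that change every module and never repeat a partner is a round-robin schedule, sketched below using the standard “circle method”. This is an illustration rather than the procedure actually used in EE102: the function name `round_robin_pairings` and the roster are hypothetical. A full rotation also needs one round per classmate, so a semester with fewer modules than that would use only the first few rounds.

```python
def round_robin_pairings(students):
    """Return a list of rounds; each round is a list of (student, partner) pairs.

    Uses the circle method: one student stays fixed while the others rotate
    one position per round, so no pair repeats across rounds.
    """
    roster = list(students)
    if len(roster) % 2:
        # With an odd class size, None marks the student without a partner
        # in that round (who could join an existing pair).
        roster.append(None)
    n = len(roster)
    rounds = []
    for _ in range(n - 1):
        pairs = [(roster[i], roster[n - 1 - i]) for i in range(n // 2)]
        rounds.append(pairs)
        # Keep the first entry fixed and rotate the rest by one position.
        roster = [roster[0], roster[-1]] + roster[1:-1]
    return rounds


# Hypothetical four-student roster: three rounds cover all six possible pairs.
rounds = round_robin_pairings(["Ana", "Ben", "Cho", "Dev"])
```

Each element of `rounds` could be assigned to one module, with the roster shuffled once at the start of the semester so the order is not predictable.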

Successes (2014):

- I managed to actually finish all of my Captivate lectures! It was exhausting and I’m hoping that Spring 2015 will be easier because this is done.

- Responsibility for learning was successfully transferred to the students! The students fought this since they were used to the standard lecture format. They seemed to believe that if I just told them the answer they would somehow miraculously have “learned” it. It wasn’t until after the success of the first exam that they were willing to believe that they would learn better if they took responsibility for the learning.

- I got through all the material! I had read that flipping allowed for more material to be covered. In the past I had always had to rush at the end of the semester or not cover material. The structure of a flipped classroom allowed me to keep pace and not be derailed by a few students who struggled. They had the lectures available 24/7 and could repeat them as often as they needed.

- The class had small successes in sharing lab data. This led to a richer data set where we could talk about measurement errors and measurement variances. In some cases, students found problems with their measurements in real time by comparing their measurements with the class’s. They were then able to correct those problems while still in lab. Collaborating on lab data was never possible in the past. I saw this as a huge success. However, I also recognize that I need to work at making this more structured. This will be a prime area to work on in Spring 2015.

Frustrations and what to do about them:

- Although I sent a pre-class email letting the students know that we were flipping the classroom, they really were not prepared for the actual flip. They did not really see why this was important or what their responsibility was. PREPARING THE STUDENTS IS PARAMOUNT! This means telling them specifically how flipping will impact their learning and that they have the responsibility to ensure their success.

- The students in this class did what most students do: they procrastinated until the last possible minute and only did that part of the work that would impact their grade. Since I did not “grade” whether or not they watched the online lectures, the students were not prepared for the in-class activities. I did give mini-quizzes in class to provide formative feedback to the students on whether or not they understood the material. However, since the quizzes earned no points beyond credit for being in class and taking the quiz, the students were not prepared and so the formative feedback was nullified. As discussed in Love et al. (2014), I will now give the students an online quiz to assess class preparation, and the quiz will be graded! Something to look forward to for Spring 2015.

- When given the opportunity to work collaboratively in class, the students did not group up! With the exception of a few, the students continued to work by themselves. One of the major reasons for me to flip my classroom was the perceived benefit of peer-to-peer instruction and the increase of social capital. Since the students did not on their own seek to work in groups, I will need to force this in the future.

This material is based upon work supported in part by the National Science Foundation under Grant Number DUE-1245815. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author and do not necessarily reflect the views of the National Science Foundation.